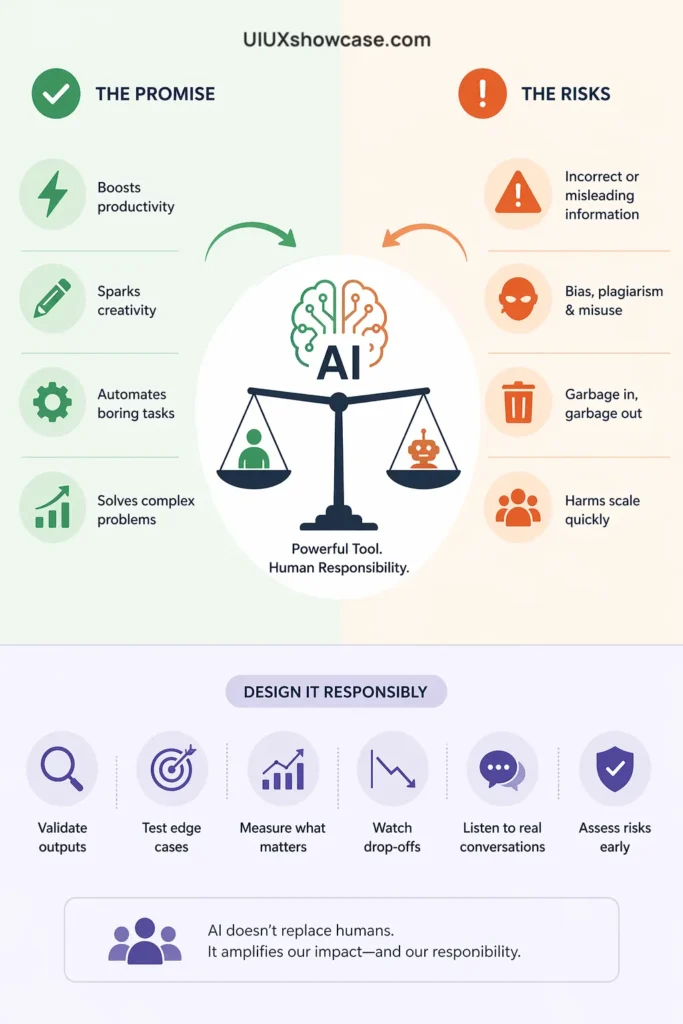

AI is transforming UX design—but not without risks. Explore the real benefits, hidden challenges, and what designers must consider when building AI-powered products responsibly.

Artificial intelligence—AI, as we casually call it—is having a serious moment right now.

Tools like ChatGPT, Lensa, and DALL·E 2 have quickly moved from niche experiments to products people actually rely on every day.

But here’s the interesting part—it’s not all excitement. Alongside the praise, there’s a growing sense of hesitation.

Questions around content accuracy, plagiarism, bias, and misuse keep popping up, and they’re not easy to ignore.

In a recent discussion, Susan Farrell, Principal UX Researcher at mmhmm, reflects on her experience studying chatbots and AI-powered products.

She breaks down what AI really is (and what it isn’t) while also highlighting the practical—and often overlooked—things teams should consider before bringing AI into their products.

AI in UX: The Exciting Promise… and the Uncomfortable Truth Behind It

Let’s be honest for a second.

AI feels a bit like magic right now. One minute you’re typing a prompt into ChatGPT, and the next—you’ve got code, content, ideas, even design suggestions. It’s fast. Almost too fast.

But if you’ve spent even a little time working in UX, you probably feel that quiet tension in the background.

Like… this is powerful, but is it reliable? Is it safe? Are we moving too fast?

That tension? It’s valid.

A recent discussion on the Nielsen Norman Group podcast explores exactly this—cutting through the hype and asking the harder questions about AI in design and product development.

And honestly, the answers aren’t black and white.

🤖 First things first: AI isn’t what you think it is

Here’s the thing—and this might sound a bit disappointing.

AI isn’t actually intelligent.

At least, not in the way we imagine.

It doesn’t think. It doesn’t understand. It doesn’t have intent.

What it really does is spot patterns, remix data, and generate outputs based on what it has seen before.

So when ChatGPT writes something that feels human…

…it’s not thinking like a human.

It’s predicting what a human might say next.

That distinction matters more than it seems.

Because once you realize this, a lot of AI’s limitations suddenly make sense.
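To make “predicting what a human might say next” concrete, here is a toy sketch in Python. It is a bigram counter, enormously simpler than the neural networks behind ChatGPT, and the function names (`train_bigrams`, `predict_next`) and the tiny corpus are illustrative assumptions, not taken from any real product. The underlying idea is the same, though: the “answer” is just the continuation that appeared most often in the training data.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for every word, which words followed it in the training text."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows: dict[str, Counter], word: str) -> str | None:
    """Return the most frequent follower of `word`, or None if it was never seen.

    There is no understanding here: the prediction is simply whatever
    appeared most often after this word in the training data.
    """
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# A hypothetical, deliberately tiny "training corpus".
corpus = "the user clicks the button . the user reads the label . the user clicks the link"
model = train_bigrams(corpus)

print(predict_next(model, "user"))     # clicks
print(predict_next(model, "unicorn"))  # None
```

Ask it what follows “user” and it says “clicks”, not because it knows anything about users, but because that pair appeared twice and “reads” appeared only once. Unlike this toy, a production language model will essentially always produce some continuation, which is part of why its mistakes arrive sounding so sure of themselves.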

⚡ The part everyone loves: speed, automation, and “less boring work”

Let’s not pretend there aren’t real benefits.

AI is genuinely useful.

- It speeds up repetitive tasks

- It helps generate first drafts (content, code, ideas)

- It assists with translation, transcription, and accessibility

- It even supports serious fields like healthcare—think cancer detection or drug discovery

And for designers?

It’s like having a junior assistant who never sleeps.

Need wireframe ideas? Done.

Need microcopy suggestions? Done.

Need variations? Done, done, done.

It reduces what Susan Farrell calls “drudgery”—the boring, repetitive stuff we all secretly hate.

But there’s always a but.

⚠️ The quiet problem: it looks smarter than it is

This is where things get tricky.

AI doesn’t just generate content.

It generates convincing content.

And that’s dangerous.

Because it creates an illusion of correctness.

You read something and think, “Yeah, that sounds right.”

But is it?

AI can:

- Invent facts

- Misrepresent sources

- Reinforce bias

- Produce harmful or inappropriate outputs

And it does all of this with confidence.

No hesitation. No warning.

That’s not intelligence—it’s pattern prediction without accountability.

🧠 “Garbage in, garbage out” — still painfully true

You know that old computing rule?

It hasn’t gone anywhere.

If the data going into AI systems is flawed, biased, incomplete, or messy…

…the output will be too.

And here’s the uncomfortable part:

Many modern AI systems are trained on large amounts of data from the internet.

Which means:

- Bias gets amplified

- Misinformation spreads

- Mediocre content feeds more mediocre content

It’s a loop. And not always a good one.

👀 Humans in the loop (yes, still)

There’s another layer most people don’t see.

AI isn’t as autonomous as it appears.

Behind many systems, there are still humans:

- Labeling data

- Moderating content

- Correcting outputs

- Filling in gaps when the system fails

Sometimes these roles are hidden. Sometimes they’re underpaid.

And sometimes… they’re working at machine speed, which is not exactly humane.

It reminds me of the classic “Wizard of Oz” UX testing method—where a system looks automated, but a human is secretly running the show.

Except now, it’s not just testing. It’s real products.

⚖️ The biggest UX dilemma: control vs flexibility

Here’s a design trade-off that keeps coming up.

When building AI systems, teams often have to choose:

- Option A: A controlled system (predictable, safe, limited)

- Option B: A flexible system (creative, powerful, unpredictable)

You can’t fully have both.

A tightly controlled chatbot might be boring—but safe.

A powerful language model might be impressive—but risky.

And honestly? Most users expect both.

That’s where expectations break.

💥 The real risk: scale

Traditional design mistakes affect users one interaction at a time.

AI mistakes?

They scale instantly.

One flawed system can generate:

- Thousands of misleading articles

- Endless biased outputs

- Massive misinformation waves

And it happens fast. Faster than teams can react.

That’s why risk assessment isn’t optional anymore—it’s foundational.
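A back-of-envelope calculation shows why scale changes the stakes. The sketch below assumes a constant per-output error rate, which is a simplification, and the 1% rate and 100,000 daily responses are hypothetical numbers chosen only for illustration, not measurements of any real system.

```python
def expected_flawed_outputs(error_rate: float, outputs_per_day: int, days: int = 1) -> float:
    """Expected number of flawed outputs, assuming every output has the same
    chance of being wrong (a simplification: real failure rates vary by prompt)."""
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    return error_rate * outputs_per_day * days


# Hypothetical numbers: a 1% error rate on 100,000 generated responses a day.
per_day = expected_flawed_outputs(0.01, 100_000)
per_month = expected_flawed_outputs(0.01, 100_000, days=30)
print(f"{per_day:,.0f} flawed outputs per day, {per_month:,.0f} per month")
# 1,000 flawed outputs per day, 30,000 per month
```

The error rate never changes in this example; only the volume does, and volume is exactly what AI multiplies.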

🤔 So… are the benefits worth it?

Short answer?

Sometimes.

AI brings real value. No doubt.

But it also introduces:

- Ethical concerns (data ownership, consent)

- Legal uncertainty (copyright, IP issues)

- Social risks (deepfakes, manipulation, trust erosion)

So it’s not a clean trade-off.

It’s more like a balancing act—one that’s still evolving.

🧑‍💻 What UX designers should actually do (practically speaking)

Let’s bring this back to your world.

If you’re designing AI-driven products, a few grounded takeaways:

1. Don’t trust output blindly

Always validate. Always question.

2. Test edge cases—not just happy paths

What happens when things go wrong?

3. Measure more than engagement

High engagement can hide frustration: a user who keeps rephrasing the same request looks “engaged” but may simply be stuck.

4. Pay attention to drop-offs

The people who leave early often reveal the biggest issues.

5. Listen to what people say about your product

Forums, reviews, social media—people are talking. You should be listening.

6. Ask uncomfortable questions early

What could go wrong? Who could be harmed?

Honestly, this part isn’t new UX.

It’s just… more important now.
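Of the points above, paying attention to drop-offs (point 4) is the easiest to make concrete. Here is a minimal Python sketch that computes step-to-step drop-off in a user flow. The function name `drop_off_report`, the funnel steps, and the counts are all invented for illustration; in practice the counts would come from whatever analytics tool your team already uses.

```python
from __future__ import annotations


def drop_off_report(step_counts: list[tuple[str, int]]) -> list[tuple[str, float]]:
    """For each step after the first, return the share of users from the
    previous step who did not continue to this one."""
    report = []
    for (prev_name, prev_count), (name, count) in zip(step_counts, step_counts[1:]):
        # Guard against dividing by zero when an earlier step had no users.
        rate = 0.0 if prev_count == 0 else 1 - count / prev_count
        report.append((f"{prev_name} -> {name}", rate))
    return report


# Hypothetical funnel for an AI writing assistant (numbers invented for illustration).
funnel = [
    ("opened assistant", 1000),
    ("entered prompt", 700),
    ("accepted suggestion", 280),
    ("published", 250),
]

for transition, rate in drop_off_report(funnel):
    print(f"{transition}: {rate:.0%} dropped off")
# opened assistant -> entered prompt: 30% dropped off
# entered prompt -> accepted suggestion: 60% dropped off
# accepted suggestion -> published: 11% dropped off
```

In this made-up funnel the biggest loss sits between entering a prompt and accepting a suggestion, which is where you would start asking questions: were the outputs wrong, slow, or simply not what people expected? The numbers tell you where to look, not why.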

🧩 A small reality check (and maybe a relief)

Despite all the hype—

AI isn’t replacing humans anytime soon.

It still needs:

- Guidance

- Oversight

- Correction

- Judgment

If anything, it increases the need for thoughtful designers.

Because now, you’re not just designing interfaces.

You’re shaping systems that act, respond, and influence at scale.

🧠 Final thought

AI isn’t magic.

It’s a tool. A powerful one, yes—but still a tool.

And like any tool, it reflects the people who build it.

So the real question isn’t:

“Will AI change UX?”

That’s already happening.

The better question is:

Will we design it responsibly—or just quickly?

And honestly… that choice is still ours.