Tencent Hunyuan – 3D World Models is an AI-powered platform that showcases Tencent’s generative 3D capabilities.

Hunyuan World Model 1.5 — Real-Time Interactive 3D World Generation, Redefined

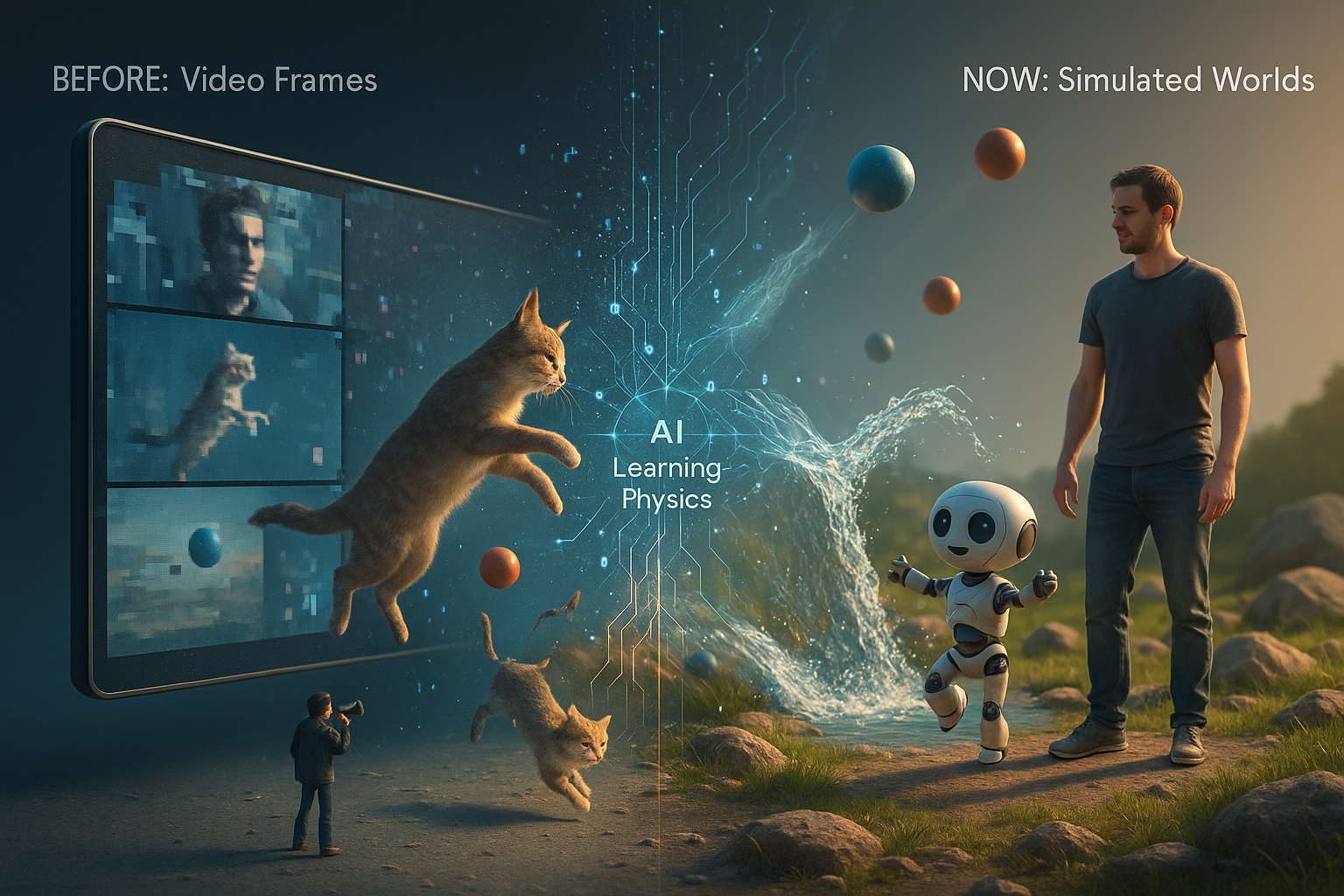

The future of interactive environments is no longer limited to pre-rendered assets or static simulations.

With Hunyuan World Model 1.5, Tencent introduces a powerful leap into real-time, AI-driven world modeling—where environments are generated, controlled, and evolved dynamically.

This open-source ecosystem—featuring Hunyuan World Model 1.0, Hunyuan World Reconstruction Model, and Voyager (a mixed-world model)—represents a growing suite of tools designed to push the boundaries of 3D generation, simulation, and interaction.

🚀 What Makes Hunyuan World Model Unique?

Hunyuan World Model 1.5 is built for real-time interaction with consistent 3D environments. Unlike traditional generative systems, it doesn’t just create visuals—it builds coherent worlds that evolve with user input.

It enables:

- 🎮 Interactive scene generation

- 🧠 Text-triggered dynamic events

- 🏗️ 3D reconstruction from data

- 🎨 Multi-style world creation

🧠 Core Innovations Behind the Model

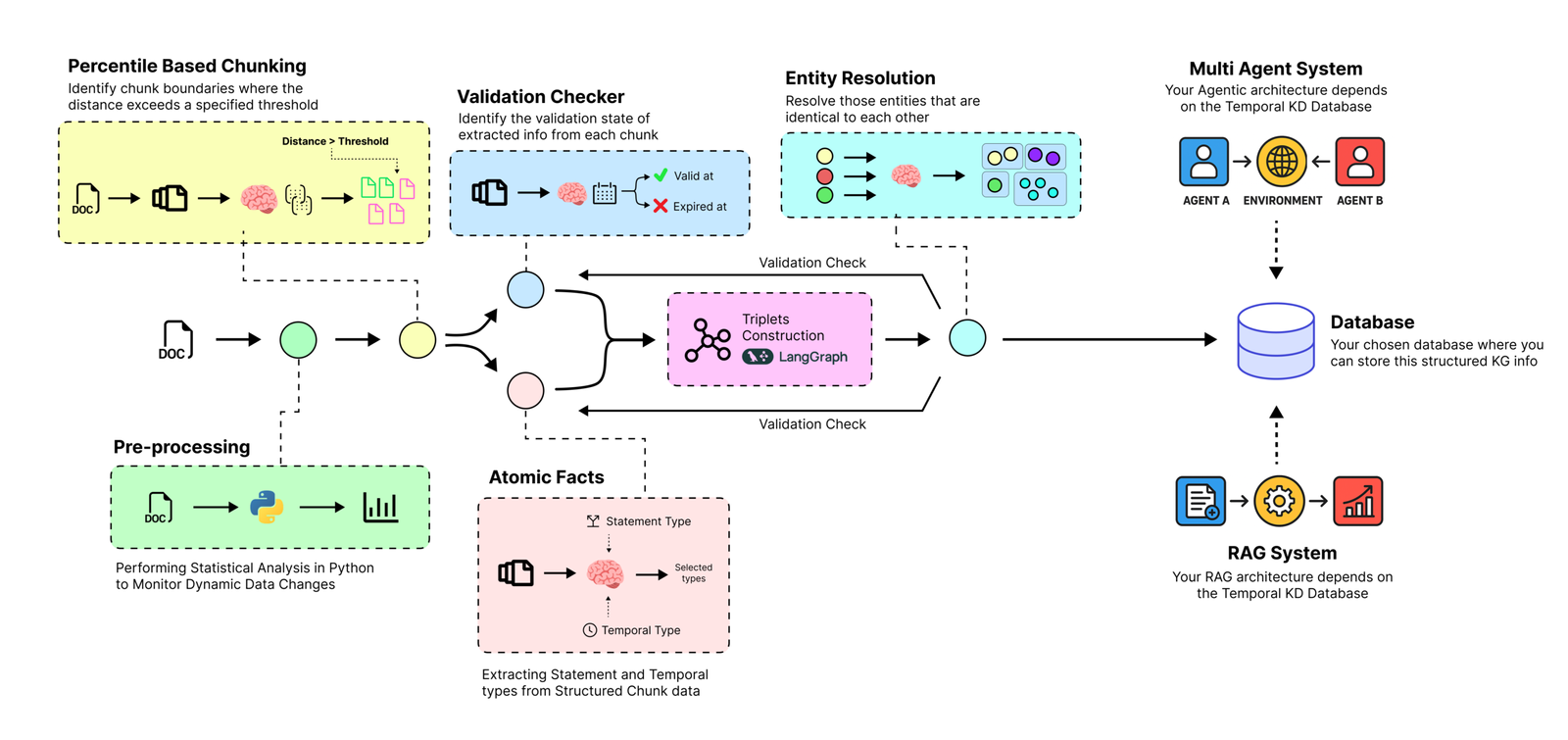

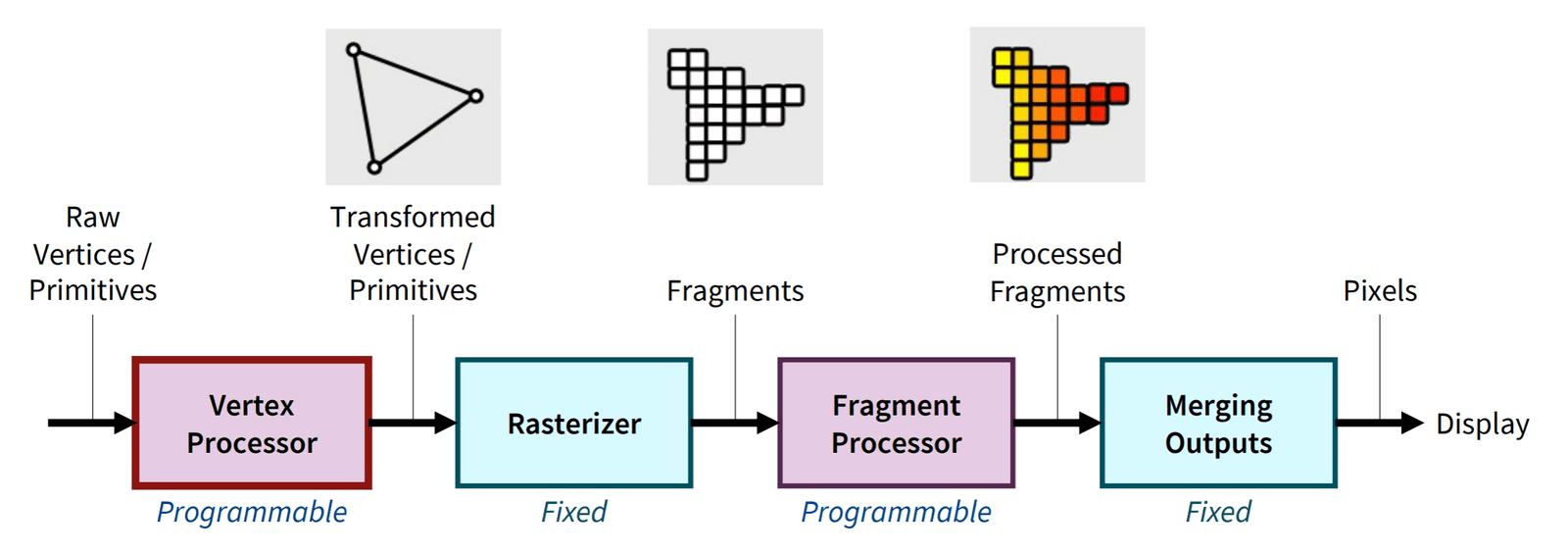

1. Precision Interactive Control Technology

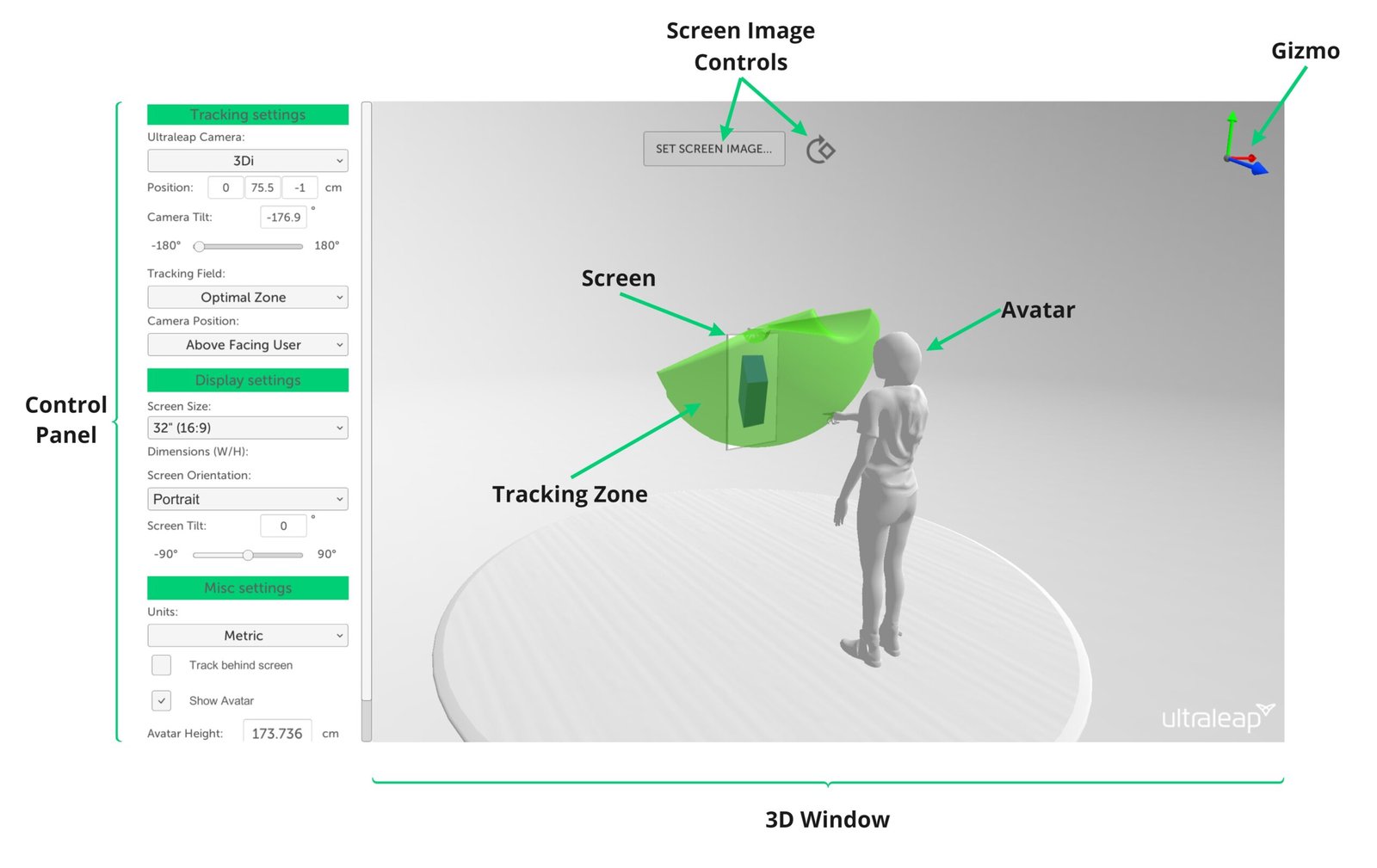

At the heart of Hunyuan is a dual-branch motion representation system:

- Combines 3D camera positioning with discrete control commands

- Improves spatial awareness using position priors

- Addresses issues such as:

  - Control drift

  - Slow convergence

  - Scene scale inconsistencies

👉 This results in precise, stable, and intuitive control over generated worlds.
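To make the dual-branch idea concrete, here is a minimal sketch of how a discrete command and a continuous camera pose might travel together as one action. The `Command`, `CameraPose`, and `DualAction` names, the ground-plane motion model, and the 0.1 step size are all assumptions made for illustration. None of them come from the Hunyuan codebase.

```python
import math
from dataclasses import dataclass
from enum import Enum


class Command(Enum):
    """Hypothetical discrete control commands (not the model's real action set)."""
    FORWARD = "forward"
    BACKWARD = "backward"
    LEFT = "left"
    RIGHT = "right"


@dataclass(frozen=True)
class CameraPose:
    x: float
    z: float
    yaw: float  # heading in radians; 0 faces +z


@dataclass(frozen=True)
class DualAction:
    command: Command  # discrete branch: what the user pressed
    pose: CameraPose  # continuous branch: where the camera ends up


def step_pose(pose: CameraPose, command: Command, step: float = 0.1) -> CameraPose:
    """Move the camera one step in the ground plane according to the command."""
    fwd = (math.sin(pose.yaw), math.cos(pose.yaw))
    right = (math.cos(pose.yaw), -math.sin(pose.yaw))
    direction = {
        Command.FORWARD: fwd,
        Command.BACKWARD: (-fwd[0], -fwd[1]),
        Command.RIGHT: right,
        Command.LEFT: (-right[0], -right[1]),
    }[command]
    return CameraPose(pose.x + step * direction[0], pose.z + step * direction[1], pose.yaw)


def build_actions(commands, start=CameraPose(0.0, 0.0, 0.0)):
    """Pair every command with the absolute pose it leads to."""
    actions, pose = [], start
    for command in commands:
        pose = step_pose(pose, command)
        actions.append(DualAction(command, pose))
    return actions


actions = build_actions([Command.FORWARD, Command.FORWARD, Command.RIGHT])
final = actions[-1].pose
print(final)
```

The point of carrying both branches is that the absolute pose gives the model a position prior with a fixed scale, while the discrete command keeps the user's intent unambiguous.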

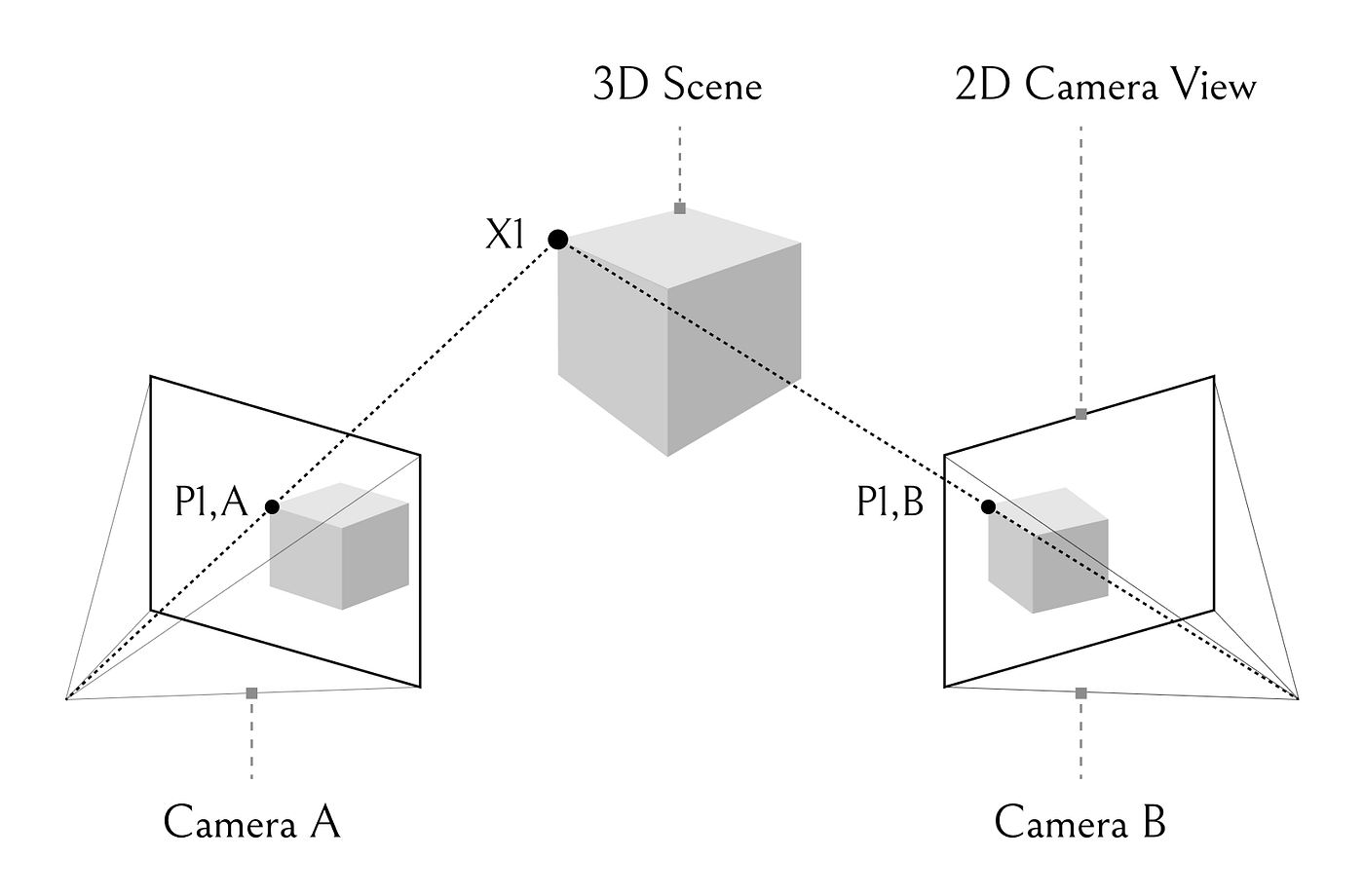

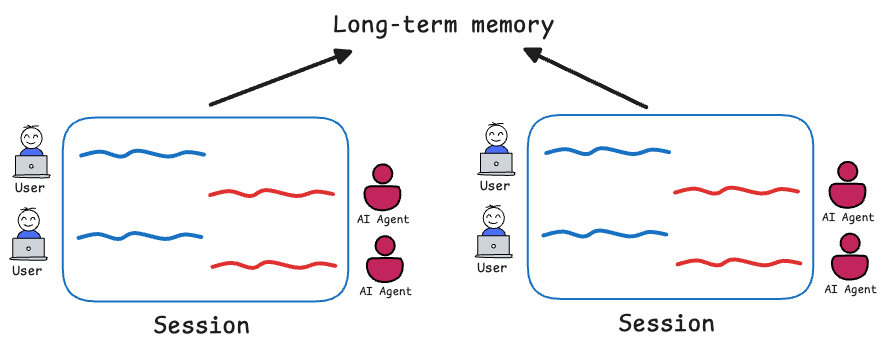

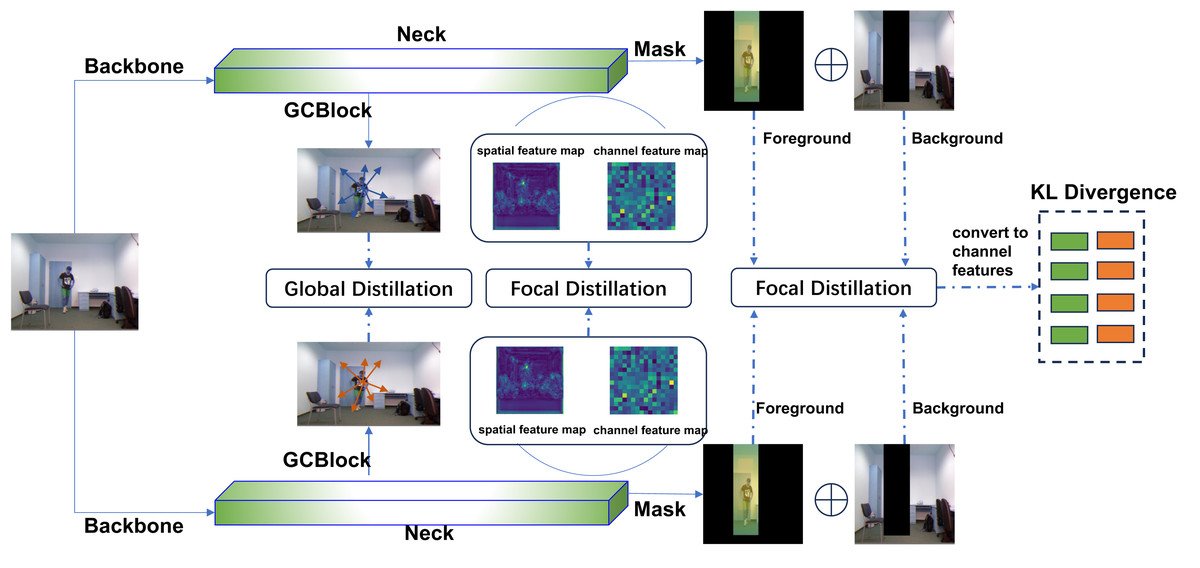

2. 3D Geometric Consistency Mechanism

Maintaining consistency over long sequences is one of the hardest challenges in generative AI, and Hunyuan addresses it with three mechanisms:

- Short-term memory → ensures smooth motion

- Long-term spatial memory → prevents geometry distortion

- Temporal reconstruction (via rotary position embedding, RoPE) → keeps long-past frames relevant

✨ The result: worlds that don’t “break” over time.
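One plausible reading of these three mechanisms, sketched as a toy: keep the most recent frames (short-term memory), retrieve older frames that were captured near the current camera position (long-term spatial memory), then give the selected frames contiguous temporal positions so the old ones do not look "far away" to the model. The function names, the distance-based retrieval rule, and the integer positions standing in for RoPE indices are assumptions for illustration, not the published method.

```python
import math


def select_context(history, current_pos, n_recent=4, n_spatial=2):
    """Pick context frames from `history`, a time-ordered list of (frame_index, (x, z)).

    Returns frame indices: spatially nearest older frames first, then recent frames.
    """
    recent = history[-n_recent:]   # short-term memory
    older = history[:-n_recent]    # candidates for long-term memory
    nearest = sorted(older, key=lambda f: math.dist(f[1], current_pos))[:n_spatial]
    nearest.sort(key=lambda f: f[0])  # restore temporal order among retrieved frames
    return [f[0] for f in nearest] + [f[0] for f in recent]


def reframe_positions(context_indices):
    """Map each selected frame to a contiguous temporal position (stand-in for RoPE indices)."""
    return {frame: position for position, frame in enumerate(context_indices)}


# A camera walks out along x and comes back to where it started.
xs = [0, 1, 2, 3, 4, 4, 3, 2, 1, 0]
history = [(i, (float(x), 0.0)) for i, x in enumerate(xs)]

context = select_context(history, current_pos=(0.0, 0.0))
positions = reframe_positions(context)
print(context)
print(positions)
```

In this toy walk, the frames from the start of the trip are retrieved again when the camera returns, which is the behavior that would keep revisited geometry from drifting.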

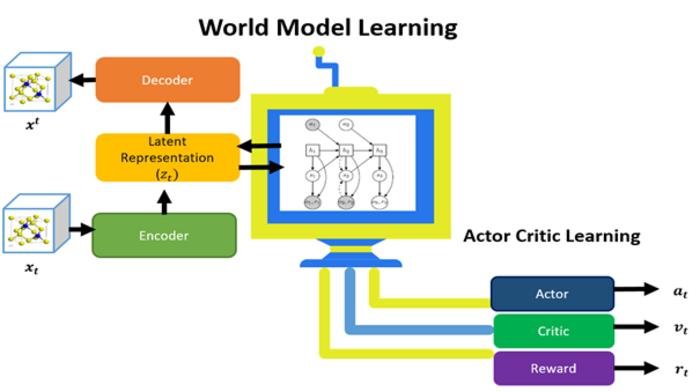

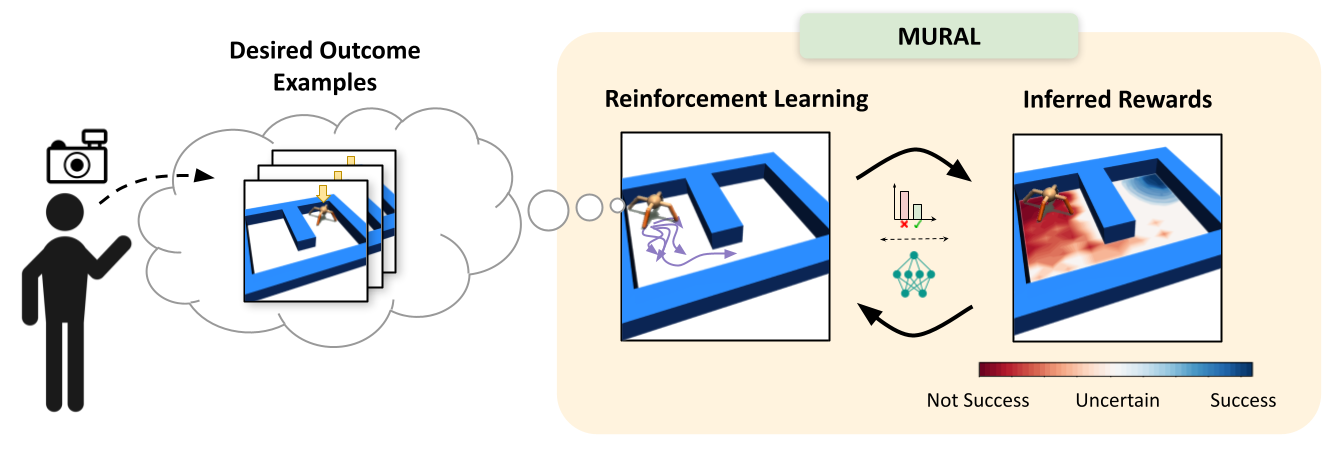

3. World Compass Reinforcement Learning Framework

Hunyuan introduces a custom RL system called World Compass:

- Improves action accuracy + visual quality

- Uses:

  - Progressive rollout strategies

  - Fine-grained reward systems

- Aligns training with autoregressive inference behavior

📈 Outcome: Faster learning + better real-time outputs
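As a rough illustration of what "progressive rollout" and "fine-grained rewards" could mean in practice, the sketch below grows the rollout length over training and scores each clip separately instead of assigning one reward per rollout. The schedule constants, the equal weighting, and both function names are hypothetical. They are not taken from World Compass.

```python
def rollout_length(step, start=4, grow_by=4, grow_every=100, max_len=32):
    """Hypothetical schedule: start with short rollouts and lengthen them over training."""
    return min(max_len, start + (step // grow_every) * grow_by)


def per_clip_rewards(action_errors, visual_scores, w_action=0.5):
    """Score every clip on its own by blending action accuracy and visual quality.

    `action_errors` are clamped to [0, 1] (0 means the action was followed exactly);
    `visual_scores` are assumed to already lie in [0, 1].
    """
    rewards = []
    for err, vis in zip(action_errors, visual_scores):
        action_term = 1.0 - min(max(err, 0.0), 1.0)
        rewards.append(w_action * action_term + (1.0 - w_action) * vis)
    return rewards


print([rollout_length(s) for s in (0, 250, 10_000)])
print(per_clip_rewards([0.0, 1.0], [1.0, 0.0]))
```

Training on rollouts the model generated itself, rather than on ground-truth context only, is what brings training closer to autoregressive inference.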

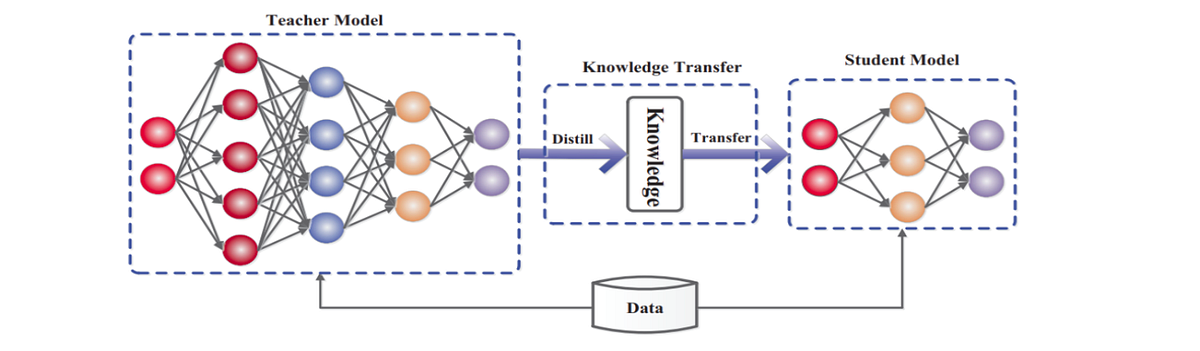

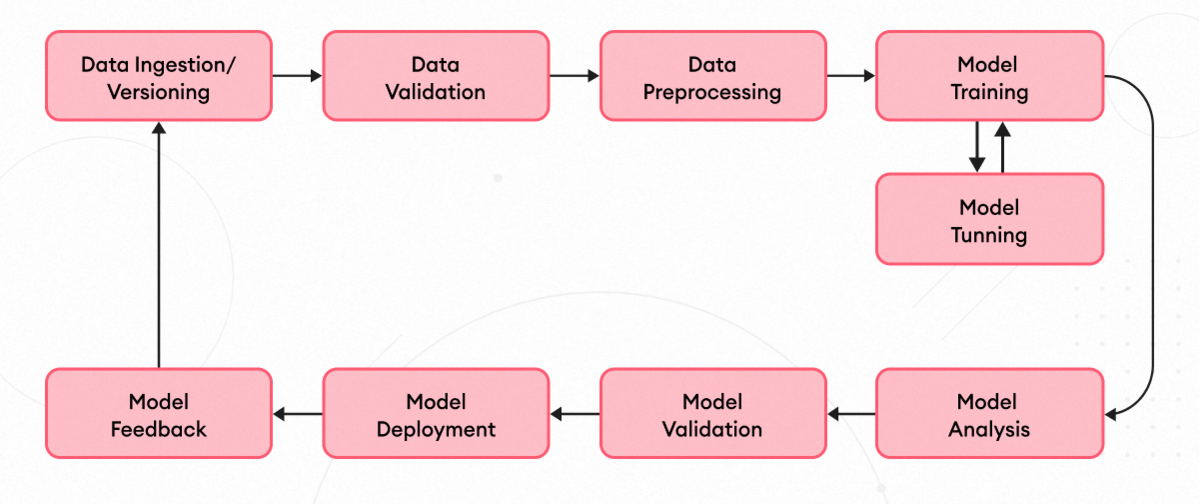

4. Efficient Model Optimization & Distillation

To balance speed and performance:

- Uses context-forcing distillation

- Aligns memory between teacher and student models

- Prevents:

  - Error accumulation

  - Model collapse (an issue seen with Distribution Matching Distillation, DMD)

⚡ Enables real-time performance without sacrificing quality

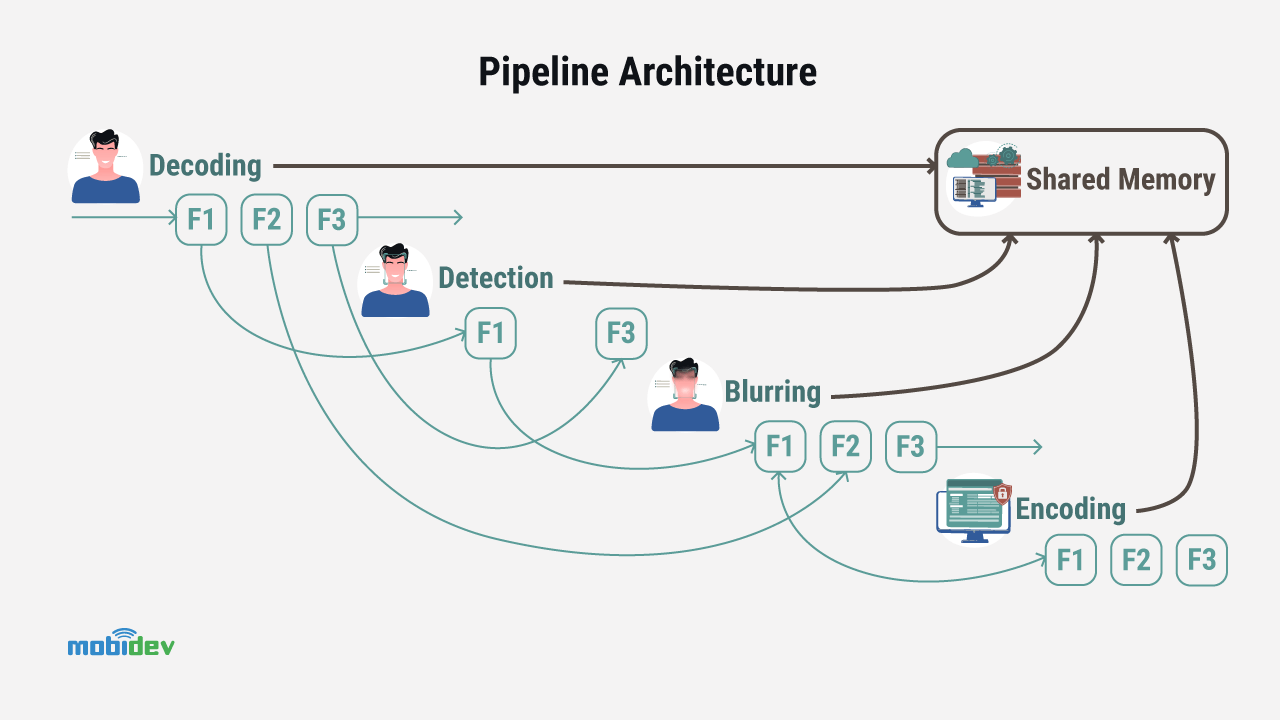

5. Real-Time Streaming Inference Engine

Built for real-world usage:

- Supports 720p resolution at 24 FPS

- Uses:

  - Hybrid DiT + VAE parallel processing

  - Streaming decoding

  - Model quantization

🚀 Result: Low-latency, long-duration world generation
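To put the 720p at 24 FPS target in numbers, and to show what streaming decoding looks like in principle, here is a small sketch. It assumes 720p means 1280×720 pixels. The `stream_frames` generator and the toy `decode` function are placeholders, not the engine's actual API.

```python
TARGET_FPS = 24
WIDTH, HEIGHT = 1280, 720  # assumption: "720p" means 1280x720

FRAME_BUDGET_MS = 1000 / TARGET_FPS             # time available per frame
PIXELS_PER_SECOND = WIDTH * HEIGHT * TARGET_FPS  # output pixel throughput


def stream_frames(latents, decode, chunk_size=4):
    """Decode latents chunk by chunk and yield frames as soon as each chunk is ready."""
    for start in range(0, len(latents), chunk_size):
        for frame in decode(latents[start:start + chunk_size]):
            yield frame


def toy_decode(chunk):
    """Placeholder for a VAE decoder: one output frame per latent."""
    return [latent * 2 for latent in chunk]


frames = list(stream_frames([1, 2, 3, 4, 5], toy_decode, chunk_size=2))
print(f"{FRAME_BUDGET_MS:.2f} ms per frame, {PIXELS_PER_SECOND:,} pixels per second")
print(frames)
```

Because the generator yields frames before the whole sequence is decoded, the first frame can reach the viewer while later chunks are still being processed. Every stage then has to fit within a budget of roughly 41.67 ms per frame on average.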

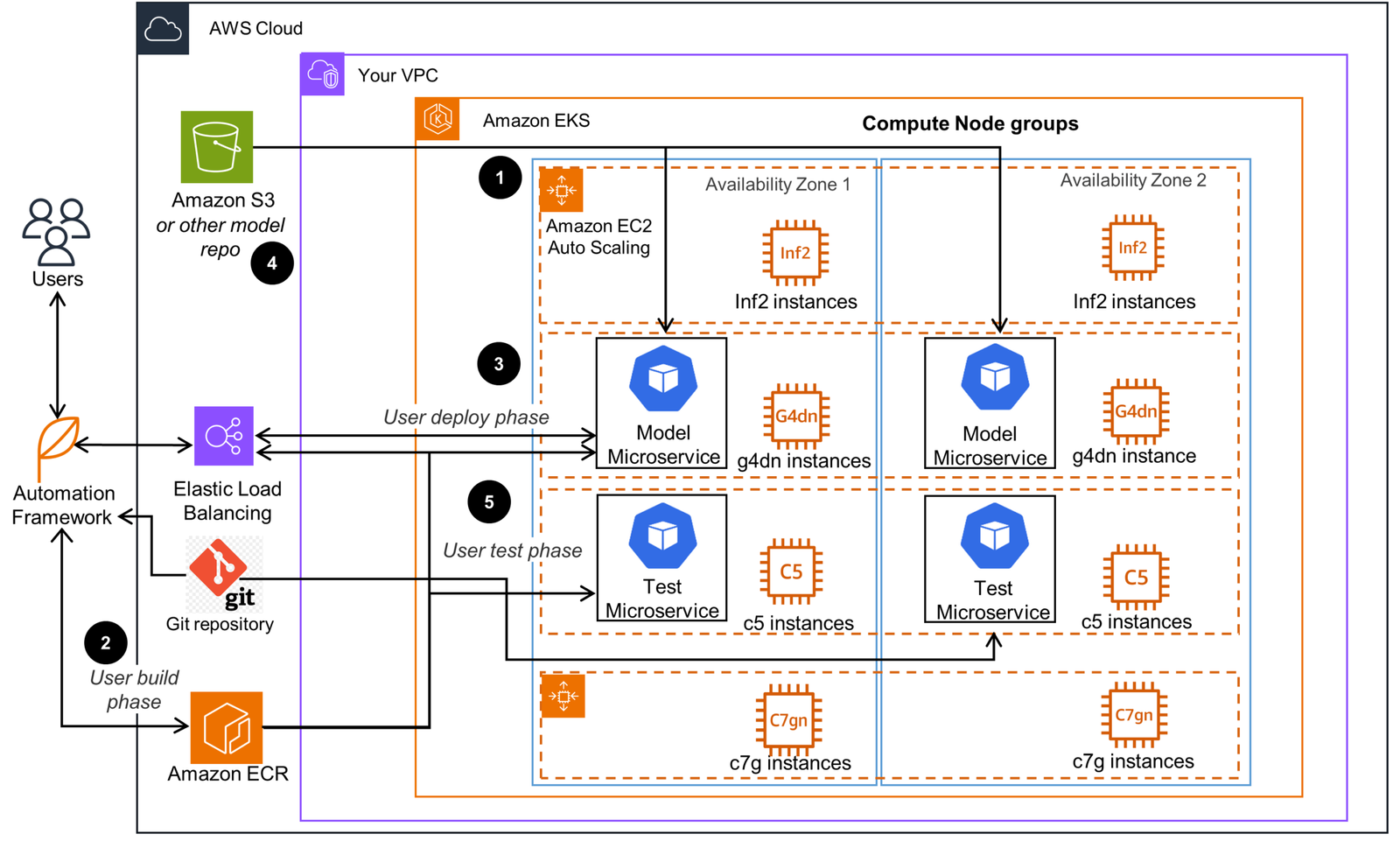

🏗️ Architecture Overview — HY-World 1.5

HY-World 1.5 is trained on a Next-Frame Prediction autoregressive task, meaning:

- It predicts how a world evolves frame by frame

- Maintains long-term geometric consistency

- Enables continuous interaction without resets
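The next-frame prediction loop described above can be sketched in a few lines. The `predict_next` callable stands in for the trained model, and the sliding `context_len` window is an assumption about how context might be bounded. Frames here are plain integers so the example stays runnable.

```python
def rollout(predict_next, first_frame, actions, context_len=8):
    """Autoregressive generation: each new frame depends on recent frames and one action."""
    frames = [first_frame]
    for action in actions:
        context = frames[-context_len:]  # the model only sees a bounded window
        frames.append(predict_next(context, action))
    return frames


def toy_model(context, action):
    """Placeholder predictor: the 'world state' is the last frame plus the action."""
    return context[-1] + action


world = rollout(toy_model, first_frame=0, actions=[1, 2, 3])
print(world)
```

Since every generated frame is fed back in as context, small errors can compound over time, which is why the memory and distillation techniques above matter for long sessions.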

Key Pillars:

- Dual-branch action control

- Contextual memory reconstruction

- RL-based post-training

- Context-aligned distillation

Additionally, Tencent built a 3D scene-rendering pipeline to generate massive amounts of high-quality training data—boosting performance significantly.

🎥 What Can It Actually Do?

First-Person Perspective

Experience worlds like a player—fully immersive and responsive.

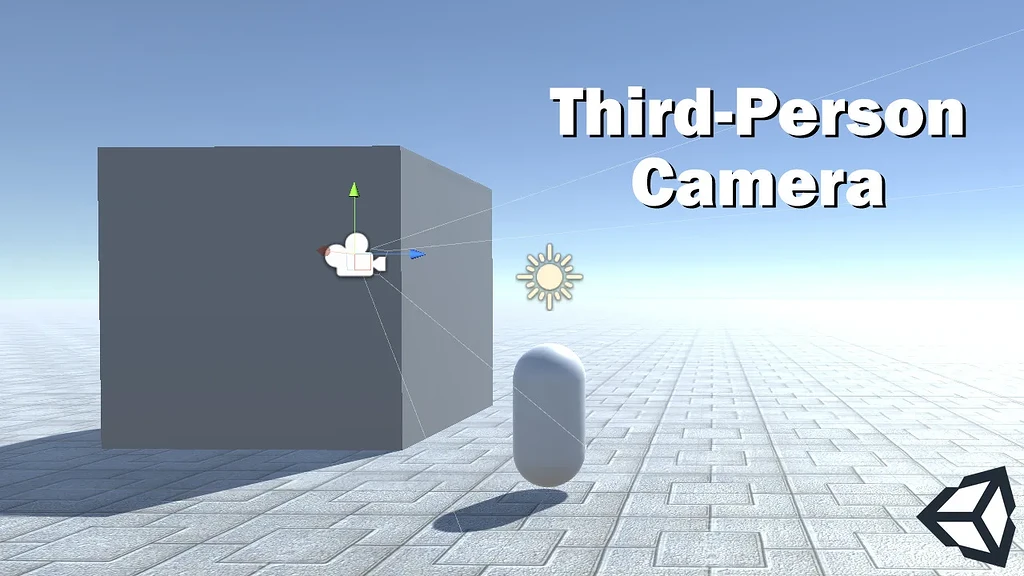

Third-Person Perspective

Observe environments from an external viewpoint—ideal for storytelling and simulations.

Stylized Scenes

Supports diverse visual styles—from realistic to artistic.

Real-Time Triggered Events

Generate events dynamically using:

- Text prompts

- User inputs

- Environmental conditions

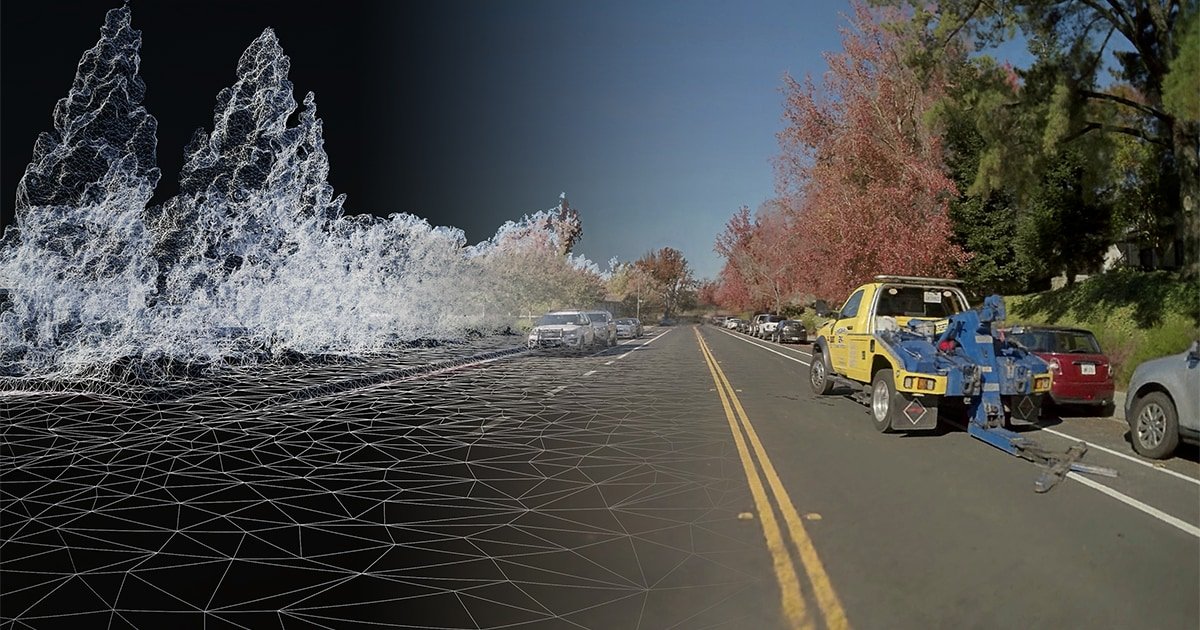

3D Reconstruction Capabilities

Turn data into high-fidelity, structured 3D environments.

🌐 Open Source & Ecosystem

Hunyuan World Model is backed by a growing ecosystem:

- 🔗 GitHub (codebase & contributions)

- 📄 arXiv (research paper)

- 🤗 Hugging Face (model access & demos)

- 🌍 “Experience it now” interactive playground

This makes it accessible for:

- Researchers

- Developers

- Game designers

- AI product teams

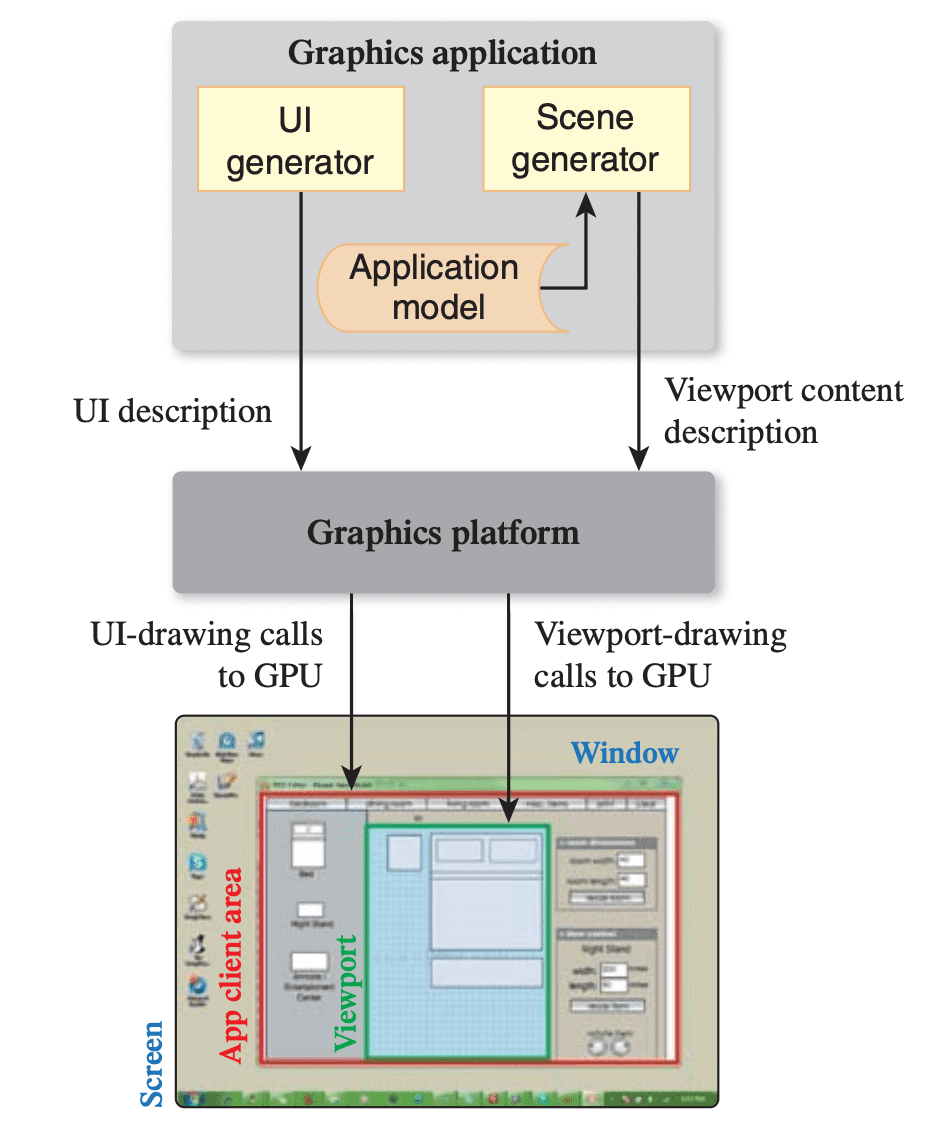

📌 Why This Matters for Designers & Builders

For UI/UX designers and product thinkers (especially in AI + SaaS):

- This shifts design from interfaces → environments

- Opens possibilities for:

  - Spatial UX

  - AI-native simulations

  - Interactive storytelling

- Introduces new challenges:

  - Designing for real-time unpredictability

  - Balancing control vs. autonomy

👉 It’s not just a tool—it’s a new design medium.

🧾 Citation

@article{hyworld2025,

title={HY-World 1.5: A Systematic Framework for Interactive World Modeling with Real-Time Latency and Geometric Consistency},

author={Team HunyuanWorld},

journal={arXiv preprint},

year={2025}

}

🔚 Final Thoughts

Hunyuan World Model 1.5 represents a major step toward AI-generated living worlds—where interaction, consistency, and realism coexist.

As AI continues to evolve, tools like this will redefine:

- How we design products

- How users interact with systems

- And how digital worlds are created
